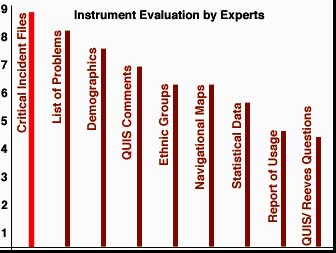

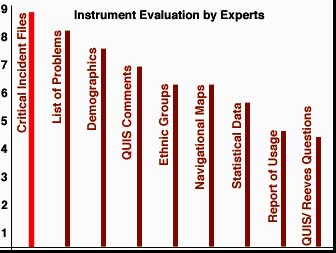

All experts in this research agreed that the multimedia files with critical incidents were the best of all instruments used in this evaluation. This result was somewhat surprising, considering that these files consisted of simple audio segments and screen shots, without the mouse movements being captured, since digital video was not available at the time of this study. This suggests that new technologies such as DVD will allow very efficient means of conducting usability inspections, which is perhaps the main finding of this dissertation.

Another instrument well received by the experts was the list of problems. This document, which was rated second best, presented the problems in context, with the description, location, and incidence of each problem. A portion of this list is shown below.

| Location | Problem Type | Description | User ID | Incidence |
|---|---|---|---|---|
| Screen 9 (tutorial) | Instructional | Formula confusing | User # 10 (USA) | 8 testers (40%) |
| Screen 2 (practice) | Interface | Button "resume" misleading | Expert # 2 | 12 testers (60%) |
| Screen 4 (objective) | Programming | Password message | User # 3 (India) | 2 testers (10%) |

Both the QUIS and the Reeves & Harmon questionnaires were rated low by the experts relative to the other instruments. A plausible explanation for these weak ratings is the lack of context these instruments provided. In the experts' opinion, the usefulness of these tools was limited.

The other instruments and procedures were rated between these two extremes. It seems that the more contextualized an instrument was, the higher the rating it received. This trend indicates that the experts preferred more qualitative instruments.